Category: outcomes

Cases, Containment & Collateral Damage

By Robert Nelson, MD. January 13, 2022. We have a choice to make, and we need to make it soon. …

Original Antigenic Sin: the Downside of Immunological Memory and Implications for COVID-19

I enthusiastically recommend this paper to my physician colleagues (or anyone) who desire a more detailed and nuanced understanding of …

Misinformation About Natural Immunity: Part 2

By Robert Nelson, MD, publisher, Forum for Healthcare Freedom. Misinformation #2: Vaccine immunity is superior to COVID natural immunity. …

Vaccine mandate for health care workers halted nationwide by Louisiana judge – Iowa Capital Dispatch

“A federal judge in Louisiana issued a ruling Tuesday blocking nationwide the Biden administration mandate requiring millions of health care …

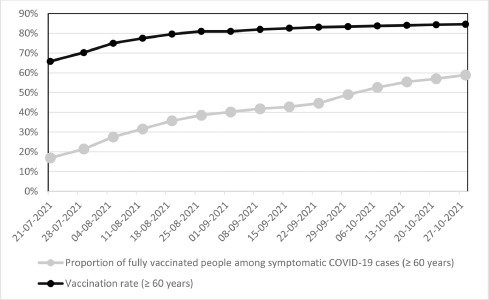

The epidemiological relevance of the COVID-19-vaccinated population is increasing – ScienceDirect

TRANSLATION: As time since vaccination elapses, the percentage of vaccinated individuals who contract SARS-CoV-2 virus and pass it to another …

Misinformation About Natural COVID Immunity: Part 1

By Robert Nelson, MD. Misinformation #1: Mild COVID cases result in short-lived or weaker immunity. It is not uncommon in …

Embrace the Naturally Immune: Hire Them – Don’t Fire Them

By Robert Nelson, MD. From the early months of the pandemic, many physicians, epidemiologists and scientists have been speaking out …